The role of open universities post-Covid

https://www.youtube.com/watch?v=0OGoH1q4Kms

Anadolu University

Anadolu University in Turkey is designated by the Turkish Higher Degree Act of 1981 as the national provider of distance education. Enrollment in...

Webinar: What is Online Learning Post-Pandemic?

What

As organizations and institutions look forward to post-pandemic offerings, many are examining to what degree online learning will factor into their daily operations as...

Book Review: Reimagining Digital Learning for Sustainable Development

Jagannathan, S. (ed.) (2021) Reimagining Digital Learning for Sustainable Development: How Upskilling, Data Analytics and Educational Technologies Close the Skills Gap New York/London: Routledge,...

The limits of online learning in a dystopian future: a discussion

Murgatroyd, S. (2021) The precarious futures for online learning Revista Paraguaya de Educación a Distancia, FACEN-UNA, Vol. 2, No. 2

Stephen Murgatroyd is a big...

Staying Online: a new book by Robert Ubell

Ubell, R. (2022) Staying Online: How to Navigate Digital Higher Education New York/London: Routledge, 180 pp

This book was published on September 7, 2021, and...

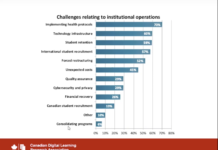

Decision-making in uncertain times: what recent research into online learning indicates

CDLRA Conversations: Decision-Making in Uncertain Times, September 6, 2021

The CDLRA (Canadian Digital Learning Research Association) is in the process of analysing the results from...

Five core trends in teaching and learning post-Covid 19

If you have 15 minutes to spare, take a look at a (virtual) presentation I made to the University of Kent in the UK...

Post-Pandemic Lesson 3. We know how to do quality online and blended learning, but...

This is the third of 10 Lessons from a Post-Pandemic World. For the other nine, click here.

“We know how to do quality online and...

Five free keynotes on online learning for streaming into virtual conferences

Sleepless nights and global learning

I received many requests last year to deliver keynotes into virtual conferences. These requests came from all around the world....

How to address the skills agenda in higher education: a German perspective

Ehlers, U-D. (2020) Future Skills: the future of learning and higher education Karlsruhe, Germany: Herstellung und Verlag, 311 pp

What is this book about?

This free,...