Book Review: Reimagining Digital Learning for Sustainable Development

Jagannathan, S. (ed.) (2021) Reimagining Digital Learning for Sustainable Development: How Upskilling, Data Analytics and Educational Technologies Close the Skills Gap Routledge: New York/London,...

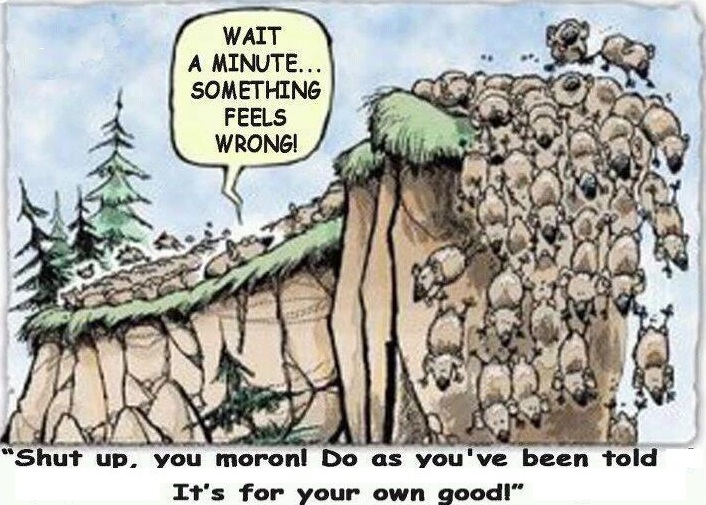

Corruption in higher education: a wake-up call

Daniel, J. (2016) Combatting Corruption and Enhancing Integrity: A Contemporary Challenge for the Quality and Integrity of Higher Education: Advisory Statement for Effective International...

Online learning in 2012: a retrospective

Well, 2012 was certainly the year of the MOOC. Audrey Watters provides a comprehensive overview of what happened with MOOCs in 2012, so I...

Massive growth of online learning in Asia

Adkins, S. S. (2012) The Asia Market for Self-paced eLearning Products and Services: 2011-2016 Forecast and Analysis Ambient Insight, October

In all the hoopla about MOOCs,...

Who has the richest professors? Canada!?

Jaschik, S. (2012) Faculty Pay, Around the World, Inside Higher Ed, March 22

Philip Altbach, Liz Reisberg, Maria Yudkevich, Gregory Androushchak, Iván Pacheco (in press) Paying...

Key points from OECD’s 2011 ‘Education at a Glance’

OECD (2011) Education at a Glance: OECD Indicators Paris: OECD

For those of you interested in educational statistics (and for masochists in general), here's your...

Webinar on Chinese online teaching

Title: The Chinese Top Level Courses: Improving the quality of online courses in a new educational climate

The next presentation in the series of...

China’s telecommunications activities in Africa

Marshall, A. (2011) China’s Mighty Telecom Footprint in Africa e-Learning Africa News Portal, February 15

For those interested in infrastructure for e-learning, this extensive article...

Interpreting international comparisons in academic achievement

OECD (2010) OECD Programme for International Student Assessment Paris: OECD

The media, worldwide, have been having a field day interpreting the latest OECD PISA results, which...

Comparing Chinese and British HE systems through their distance teaching universities

Wei, Runfang (2008) China's Radio and TV Universities and the British Open University: A Comparative Study Nanjing China: Yilin Press

This has been sitting on...