Wise, A. F. & Bergner, Y. (2020) College in the Time of Corona: Spring 2020 Student Survey New York, NY: NYU-LEARN.

This is another ‘quick-and-dirty’ survey of U.S. university students’ responses to the Covid-19 pandemic. It was conducted between March and June 2020 to find out ‘what students found worked, what didn’t and how they experienced the shift’. For a full list of studies, see Research reports on Covid-19 and emergency remote learning.

Methodology

The survey was conducted by New York University’s Learning Analytics Research Network.

University students in the United States were invited to participate via email and social media, resulting in a convenience sample of 298 respondents from 50 different institutions, although 39% were from New York University. The results for NYU students, however, were not significantly different from those for other students.

Responding students were relatively evenly distributed across different fields of study, but were predominantly female (71%) and undergraduate (60%).

Main results

I am summarising the main numerical results, but the report also contains interesting qualitative responses from students, so once again I recommend that you read the actual report.

Tools used by students

- Video conference tools (such as Zoom): 88%

- Learning management systems: 72% (57% own institution’s; 15% another platform)

- Collaborative editing tools (e.g. Google Docs): 57%

- Messaging tools (e.g. Slack): 26%

- Own computer/laptop: 96%

- Mobile devices (tablets, phones): 14%

Students reported that video conferencing tools worked ‘reasonably well’ for lectures, but often commented that there was an over-reliance on Zoom as a platform for learning. It is interesting to note that nearly a third either did not access or were not offered a learning management system.

Main technology challenges

- internet connectivity, with students reporting problems due either to slow connections or to lack of access from a location that was appropriate for their studies

Quality of learning experience

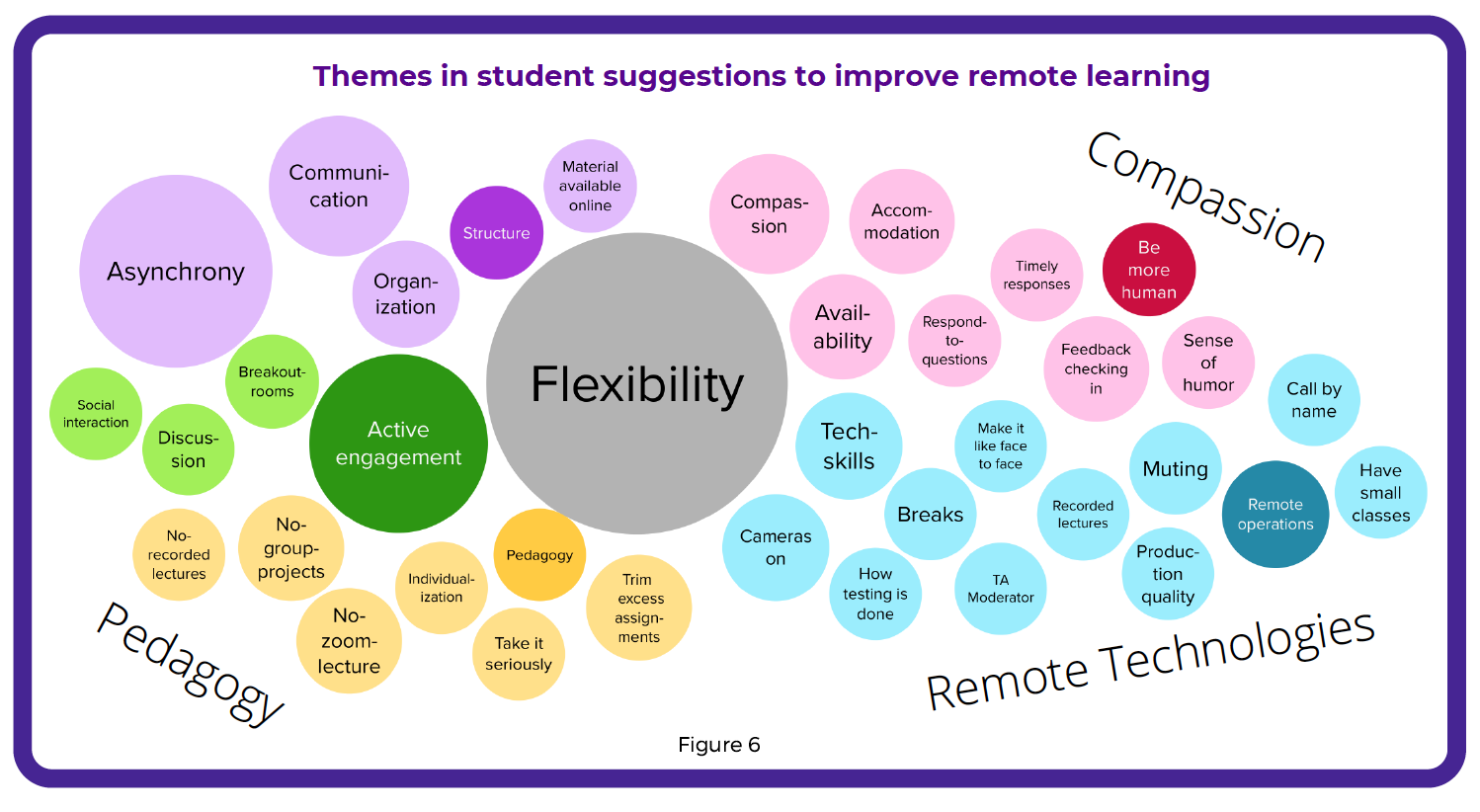

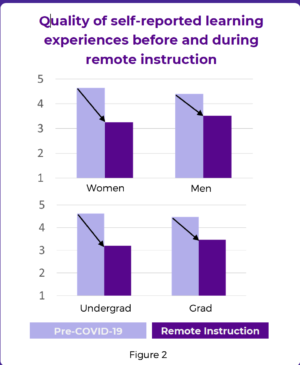

This was probably the most significant result, summarised in Figure 2 of the report:

This is a ‘half empty or half full’ result: emergency remote learning was a significantly less satisfying learning experience than pre-Covid learning, but neither was it disastrous, and indeed it improved as the situation stabilized over April and May. Clearly, students needed time to adjust; many were understandably anxious at the beginning, but they became more accepting (though not enthusiastic) as time went on. Missing deadlines was a particular concern, as many students were coping with other changes in their lives at the same time. Students also reported missing in-person contact with other students and the support they receive from it.

What worked and what didn’t

…the most important thing to students was that faculty adjusted the course and expectations for the shift online and that they offered flexibility to students who needed it. This was both the most highly praised quality of instructors who were seen to be doing well with the transition and the biggest critique of those who were not

The lack of informal interactions with other classmates was a critical dimension missing for many students online.

‘Live’ lectures were a problem for many students; there was general agreement on the value of recorded lectures in comparison. The three most important elements identified as necessary in remote learning by students were:

- flexibility

- active engagement

- asynchronicity (see Figure 6 above)

Students also reported the need for clear expectations and communication about what they are supposed to do, good course organisation and structure, and use of technology features that allow for engagement and interaction (this could have been straight out of Chapter 12 of my book, Teaching in a Digital Age). In other words, video lectures need to be supplemented with other activities to keep students engaged and motivated.

My comments

It is very useful to get student perceptions of how emergency remote learning worked for them, but some element of caution is needed here. As this study clearly indicates, students differed considerably in their reactions to emergency remote learning, and instructors varied considerably in their effectiveness in lecturing online. Students’ responses were mainly focused on improving the video lecture experience. It is not reasonable to expect students to consider alternative ways of delivering remote learning – they were responding to what they were offered.

What does come through clearly, though, are the flaws in online lecturing. In the end, re-thinking the approach to teaching is essential when students are isolated, working on their own, and under other pressures, such as parental care, working from home, or poor study conditions.

At the same time, this is a study of the initial emergency response, when there simply was not the time to re-think course design. However, it is to be hoped that over the summer, many more courses will have been re-designed for the fall semester. It will be interesting to see the extent to which this has happened, and how or whether students’ responses change as a result.

Lastly, I was surprised that learning analytics were not used in this study, given the authors’ background. I would have thought that this could be a very useful methodology for such a topic – but maybe that is happening somewhere.

Dr. Tony Bates is the author of eleven books in the field of online learning and distance education. He has provided consulting services specializing in training in the planning and management of online learning and distance education, working with over 40 organizations in 25 countries. Tony is a Research Associate with Contact North | Contact Nord, Ontario’s Distance Education & Training Network.

Excellent article differentiating from what we are presently seeing as “emergency remote teaching” and “online teaching”. A useful basis for reflection for anyone who has an interest in where we are today with HE teaching & learning and where we are going.

In a situation where there is no other option, I think this emergency remote learning model works well. But so far it is not as effective as standard learning. At least, for me.

As far as I can see, most students are well-resourced and universities provide the assistance they need.